How to Understand, Price, and Package a Token-Based Offer

The New Math of AI

Hi, I’m Kyle Kelly. Each week Line of Sight breaks down how AI, strategy, and revenue growth architecture turn complexity into leverage.

I have spent nearly two decades building commercial systems where pricing and cost structure were not finance problems; they were survival problems. At Aurora, I ran strategic growth and partnerships in a market where mismodeling the cost of a financing product by even a few basis points could destroy a deal. That same pattern is playing out right now in AI, at a much faster clock speed.

By the end of this read, you will understand what your AI product actually costs to deliver, why that number is probably two to five times what you think, and how to build the financial model that closes the gap.

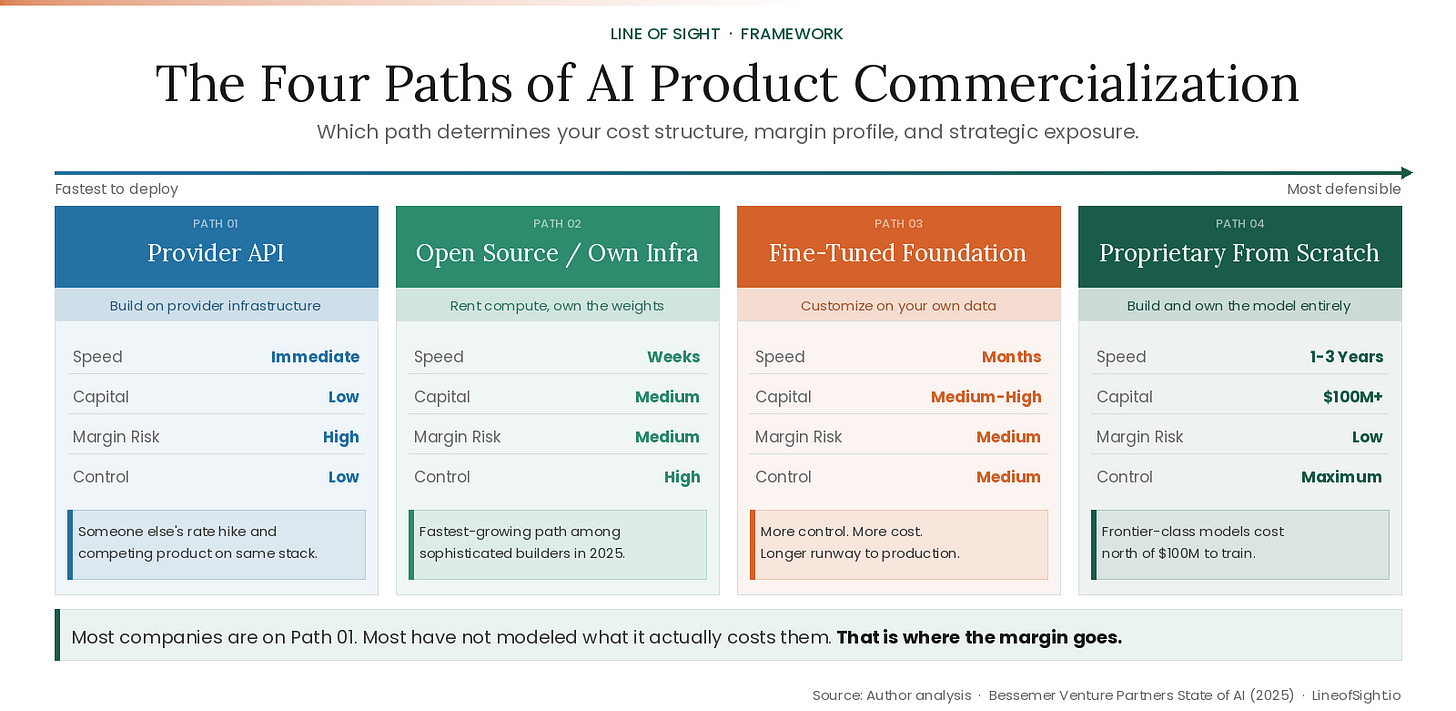

Every company building with AI right now is on one of four paths.

Which one you are on determines your cost structure, your margin profile, and your strategic exposure. Most companies are on path one. Most have no idea what path one actually costs them.

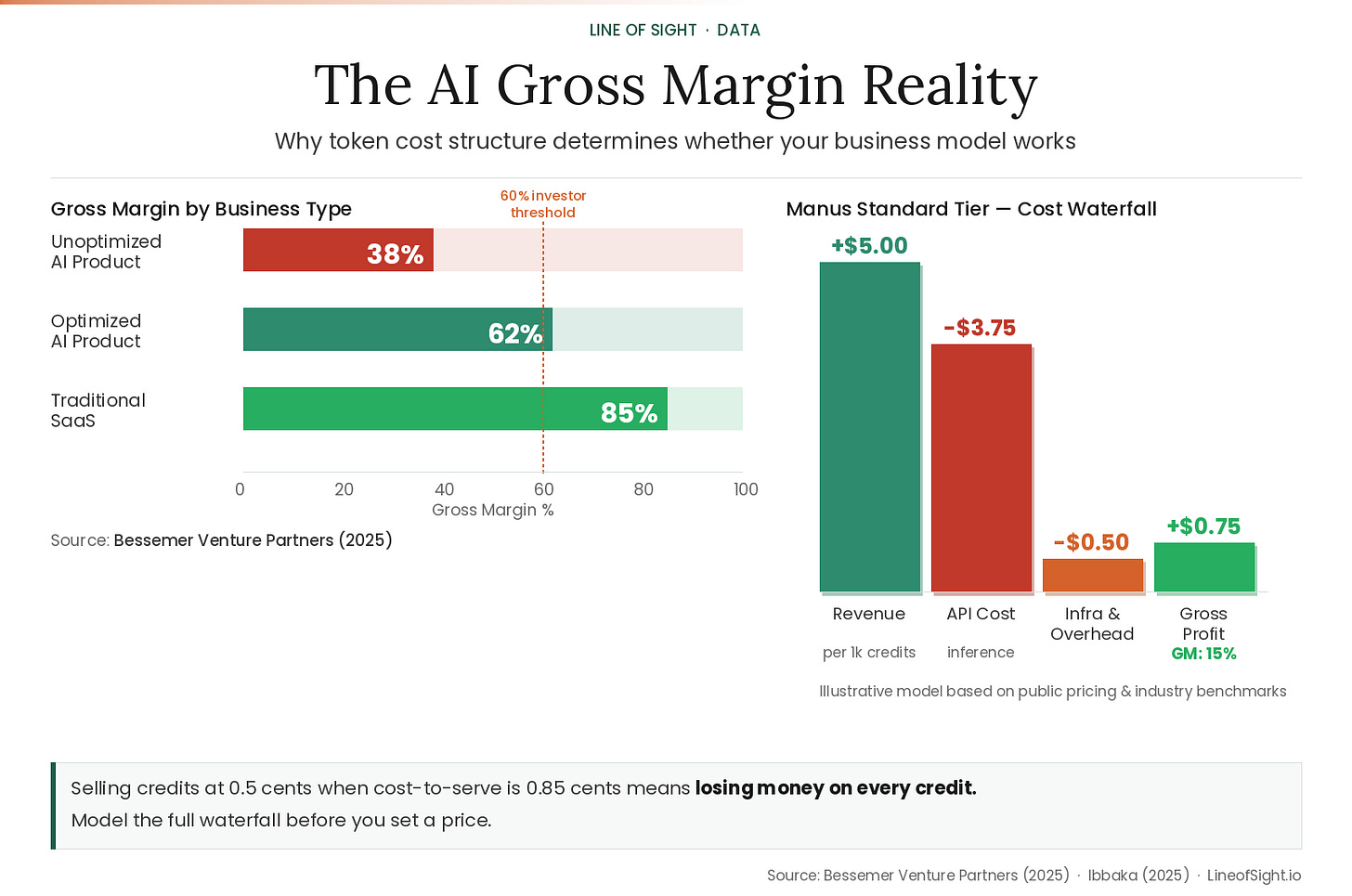

The fastest-growing AI startups are running at 25% gross margins. Sometimes negative. The healthier cohort operates closer to 60%. Traditional SaaS sits at 80-90%. Bessemer Venture Partners documented this spread in their State of AI 2025 report [1]. The cause is physics: every inference call has a price. Every generated sentence incurs real compute cost that traditional SaaS never faced.

Here is what each path actually means.

Path one: use an existing API. Fast, flexible, and someone else’s infrastructure decision. Also someone else’s pricing decision, their rate hike, and increasingly their competing product built on the same stack. One important note: path one on frontier models carries rising cost exposure. Frontier models, especially reasoning-oriented ones, often cost more per task than their predecessors, particularly for long-context and reasoning-heavy work, because each request consumes far more tokens even where per-token list prices have held flat or fallen. The inference cost curve is falling for older models. It is not falling uniformly across the stack.

Path two: run open source models on your own infrastructure. Rent the compute, own the weights. Lower margin risk than API dependence, lower capital than a proprietary build. The fastest-growing path among sophisticated builders right now, for exactly the margin reasons described in this piece.

Path three: customize a foundation model on your own data. More control, more cost, and a longer runway to production.

Path four: build proprietary from scratch. A serious 30-billion-parameter model starts at $5-10 million in compute alone. A frontier-class model costs north of $100 million to train. Billion-dollar training runs are projected before the end of 2026.

Few companies on path one have modeled what it actually costs them per transaction, fully loaded, including every token their product consumes that a user never sees.

That is where the margin goes.

The Token Is the New Unit of Production

In traditional SaaS, the marginal cost of serving one more user approaches zero. In AI, it does not.

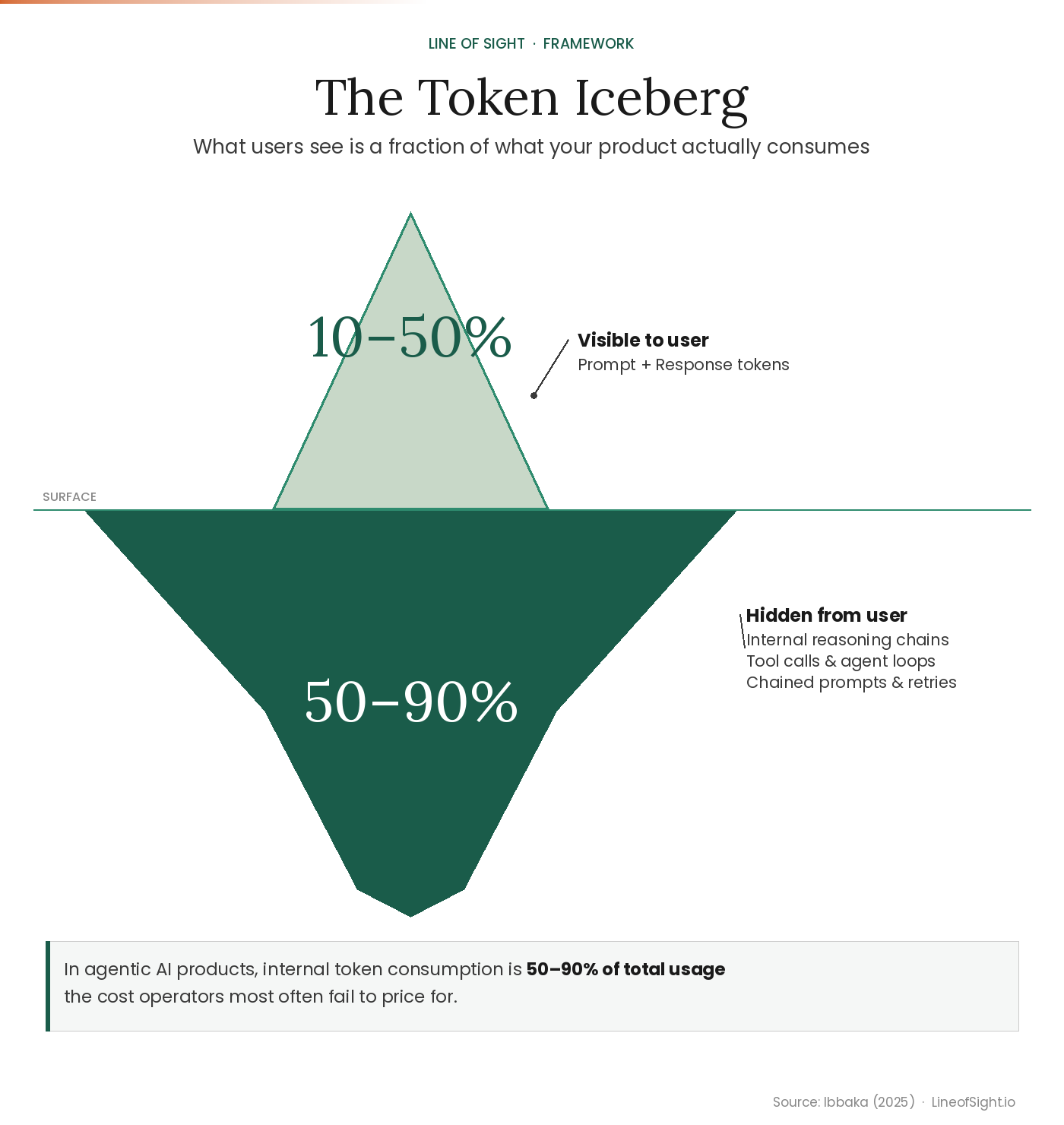

A token is roughly 0.75 words, or about 4 characters of English text. A typical user interaction consumes 200-300 tokens on the surface. The real number is often ten times that once you account for the full system. This is the Token Iceberg, and most operators only price for the visible tip.

Before you set a single price, answer one question: what is your actual token flow? That means every system prompt, every reasoning step, every agent loop, and every retry your product runs in the background. In agentic AI products, internal token consumption is 50-90% of total usage, and it is the cost operators most often fail to model.

The breakdown matters more than the total. A product with 800 tokens of visible consumption and 7,200 tokens of internal consumption does not have a usage problem. It has a pricing problem, because the cost structure is almost certainly invisible to the team setting the price.
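To see what that breakdown does to cost, here is a minimal Python sketch of one interaction's token flow, using the 800 visible and 7,200 internal tokens from the example above. The per-million-token prices and the input/output split within each bucket are hypothetical assumptions; substitute your provider's actual rates and your own instrumented counts.

```python
from dataclasses import dataclass

# Hypothetical prices; substitute your provider's actual rates.
INPUT_PRICE_PER_M = 3.00    # USD per 1M input tokens (assumption)
OUTPUT_PRICE_PER_M = 15.00  # USD per 1M output tokens (assumption)


@dataclass
class TokenFlow:
    """Token counts for one user interaction, split by visibility and direction."""
    visible_input: int
    visible_output: int
    internal_input: int   # system prompts, retrieved context, agent-loop inputs
    internal_output: int  # reasoning steps, intermediate tool calls, retries

    def total(self) -> int:
        return (self.visible_input + self.visible_output
                + self.internal_input + self.internal_output)

    def internal_share(self) -> float:
        return (self.internal_input + self.internal_output) / self.total()

    def cost_usd(self) -> float:
        inp = self.visible_input + self.internal_input
        out = self.visible_output + self.internal_output
        return (inp * INPUT_PRICE_PER_M + out * OUTPUT_PRICE_PER_M) / 1_000_000


# 800 visible + 7,200 internal tokens; the input/output split is assumed.
flow = TokenFlow(visible_input=300, visible_output=500,
                 internal_input=6_000, internal_output=1_200)
visible_only = TokenFlow(300, 500, 0, 0)

print(f"total tokens:       {flow.total()}")               # 8000
print(f"internal share:     {flow.internal_share():.0%}")  # 90%
print(f"cost, full flow:    ${flow.cost_usd():.4f}")
print(f"cost, visible only: ${visible_only.cost_usd():.4f}")
```

Under these assumed prices the visible tokens account for under a quarter of the bill, so pricing for the tip of the iceberg would understate cost-to-serve by roughly five times. Output tokens usually carry a higher rate than input tokens, which is why the split matters as much as the total.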

Make sure the math is mathing.

Work through your own numbers with the Token Flow Calculator at kylekelly.co/tools/token-calculator.

What Surviving Companies Did Differently

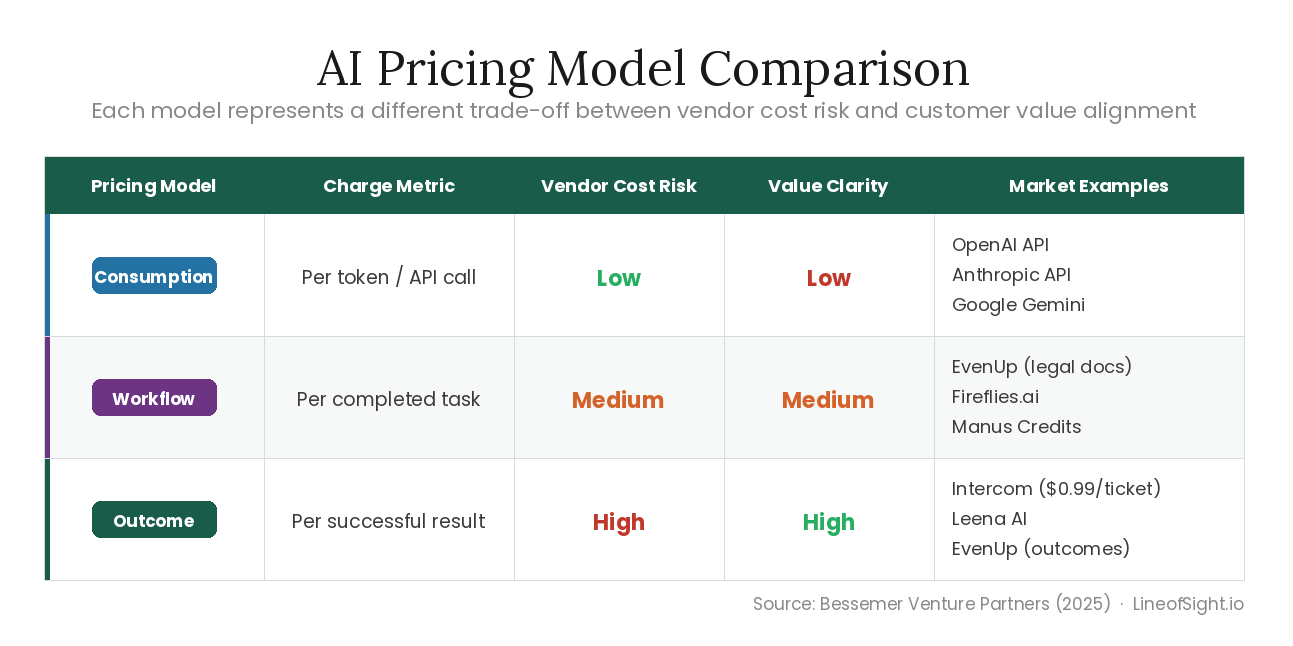

Three pricing models have emerged. Each represents a different trade-off between vendor cost predictability and customer value alignment.

As you move from consumption to outcome-based pricing, you accept more cost risk in exchange for tighter alignment with customer value. Most early-stage AI companies default to consumption because it is the easiest to implement. It is also the easiest for customers to abandon when spend becomes unpredictable.
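A minimal sketch of where the cost risk lands, assuming a hypothetical fully loaded cost of $6 per million tokens, a consumption price of $15 per million, and a $20 monthly flat fee (illustrative numbers, not benchmarks). Under consumption pricing the vendor's margin holds steady and the customer absorbs the usage swings; under a flat fee the customer's bill is predictable and the vendor absorbs them.

```python
COST_PER_M = 6.00    # hypothetical fully loaded cost, USD per 1M tokens
PRICE_PER_M = 15.00  # hypothetical consumption price, USD per 1M tokens
MONTHLY_FEE = 20.00  # hypothetical flat subscription, USD per month


def consumption_margin(tokens: int) -> float:
    """Gross margin when the customer pays per token consumed."""
    revenue = tokens / 1_000_000 * PRICE_PER_M
    cost = tokens / 1_000_000 * COST_PER_M
    return (revenue - cost) / revenue


def flat_fee_margin(tokens: int) -> float:
    """Gross margin when the customer pays a fixed monthly fee."""
    cost = tokens / 1_000_000 * COST_PER_M
    return (MONTHLY_FEE - cost) / MONTHLY_FEE


for tokens in (500_000, 1_000_000, 5_000_000):
    print(f"{tokens:>9,} tokens/month: "
          f"consumption {consumption_margin(tokens):.0%}, "
          f"flat fee {flat_fee_margin(tokens):.0%}")
```

The flat fee looks better for light users and turns negative for heavy ones. Consumption pricing never does, which is exactly why customers find it harder to budget for.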

The most defensible commercial position is outcome-based pricing, but only if you have fully loaded your cost model first. Intercom charges $0.99 per resolved support ticket. That model works because they built the cost structure before setting the price, not after. Build the model wrong and outcome pricing becomes a liability, not an advantage.
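Outcome pricing demands a fully loaded model because you pay for every attempt but bill only for successes. A minimal sketch, using the $0.99 price point above and a hypothetical $0.20 fully loaded cost per attempt (an assumption for illustration, not Intercom's actual cost or resolution rate):

```python
def outcome_margin(price_per_outcome: float,
                   cost_per_attempt: float,
                   success_rate: float) -> float:
    """Gross margin under outcome pricing.

    You bill only successful attempts but pay for all of them, so the
    effective cost per billed outcome is cost_per_attempt / success_rate.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    cost_per_outcome = cost_per_attempt / success_rate
    return (price_per_outcome - cost_per_outcome) / price_per_outcome


PRICE = 0.99             # USD per resolved ticket
COST_PER_ATTEMPT = 0.20  # hypothetical fully loaded cost of one attempt

for rate in (0.80, 0.50, 0.25):
    margin = outcome_margin(PRICE, COST_PER_ATTEMPT, rate)
    print(f"resolution rate {rate:.0%}: gross margin {margin:.1%}")
```

Under these assumptions the same $0.99 price swings from roughly 75% margin at an 80% resolution rate to under 20% at 25%, and every resolution loses money once the rate falls below about 20% (0.20 / 0.99).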

The Credit Is an Abstraction Layer, Not a Cop-Out

Credits exist because the true unit of AI production (the token) is too granular for customers to reason about. A credit is a pricing wrapper that converts technical consumption into commercial clarity. But credits solve a communication problem, not a financial one. Your internal cost structure still runs on tokens.
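A minimal sketch of why the wrapper does not change the economics: a hypothetical credit schedule that charges a fixed number of credits per task type, checked against the tokens each task actually consumes. Every price, task name, and token count here is an illustrative assumption.

```python
CREDIT_PRICE = 0.02         # hypothetical USD price of one credit
INPUT_PRICE_PER_M = 3.00    # hypothetical USD per 1M input tokens
OUTPUT_PRICE_PER_M = 15.00  # hypothetical USD per 1M output tokens

# task type: (credits charged, input tokens, output tokens), all hypothetical
TASKS = {
    "summarize_doc": (2, 4_000, 500),
    "draft_email": (1, 1_500, 400),
    "research_agent": (10, 60_000, 8_000),
}


def token_cost(input_tokens: int, output_tokens: int) -> float:
    """Provider cost in USD for one task's tokens."""
    return (input_tokens * INPUT_PRICE_PER_M
            + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


def cost_per_credit(task: str) -> float:
    credits, inp, out = TASKS[task]
    return token_cost(inp, out) / credits


def credit_margin(task: str) -> float:
    return (CREDIT_PRICE - cost_per_credit(task)) / CREDIT_PRICE


for task in TASKS:
    print(f"{task:<15} cost/credit ${cost_per_credit(task):.5f}  "
          f"margin {credit_margin(task):.0%}")
```

Every credit looks identical to the customer, but under these assumptions the research agent costs about 1.5 times what each of its credits sells for, while the document tasks clear roughly 50%.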

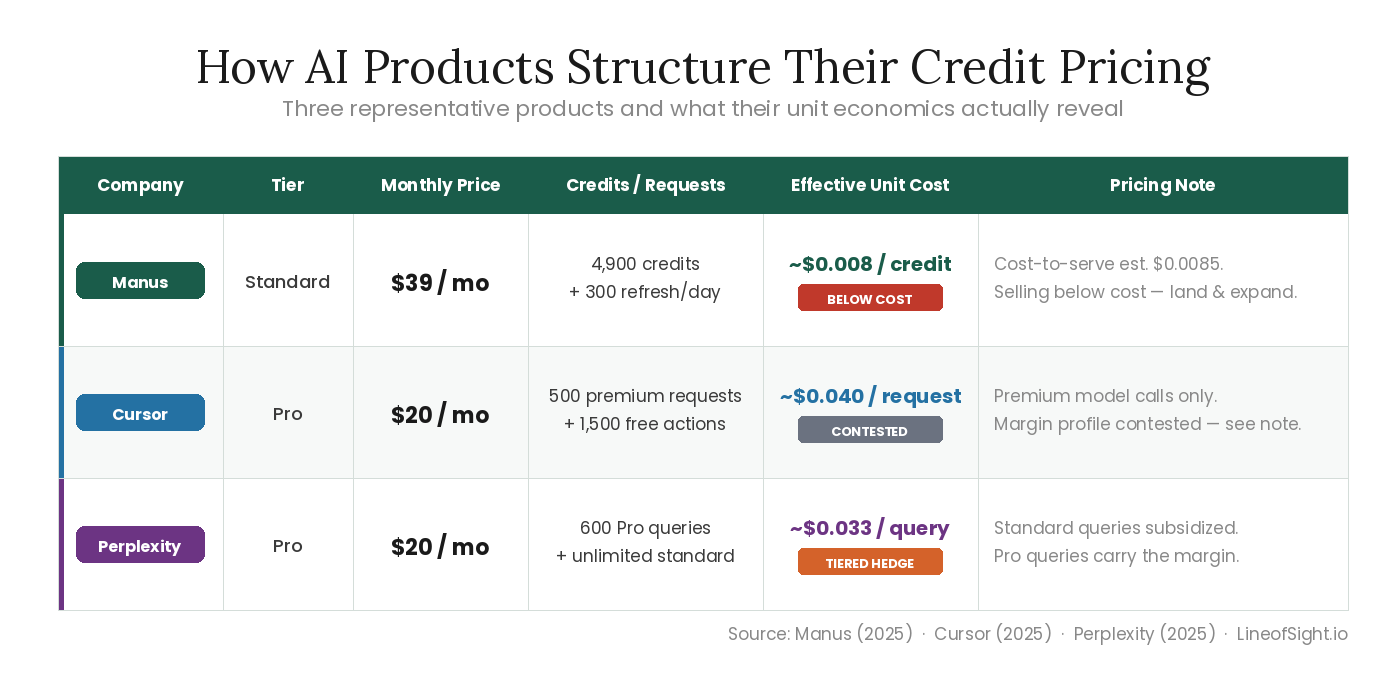

Here is how three representative products have structured it:

The Manus Standard tier reveals the gap most clearly. At 0.5 cents per credit, with a cost-to-serve closer to 0.85 cents, they are selling below cost at current pricing. That is a deliberate land-and-expand play. It only works if two things happen before the runway runs out: engagement deepens enough to justify a price increase, and volume grows enough to negotiate better infrastructure rates. Most companies running this playbook will not hit both.
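To see why hitting both conditions is hard, here is a minimal sketch that takes the 0.5-cent price and 0.85-cent cost-to-serve above and asks how far cost-to-serve would have to fall, after a given price increase, to reach break-even or a 60% gross margin. The function name and price-increase scenarios are illustrative, and the model assumes price and cost are the only variables.

```python
def required_cost_cut(price: float, cost: float, price_increase: float,
                      target_margin: float = 0.0) -> float:
    """Fractional cut in cost-to-serve needed to reach target_margin.

    Gross margin m = (p - c) / p, so reaching m requires c <= p * (1 - m).
    Returns 0.0 when the price increase alone is enough.
    """
    new_price = price * (1 + price_increase)
    max_cost = new_price * (1 - target_margin)
    return max(0.0, 1 - max_cost / cost)


PRICE = 0.005  # USD per credit, Manus Standard figure from the text
COST = 0.0085  # USD cost-to-serve per credit, figure from the text

print(f"current gross margin: {(PRICE - COST) / PRICE:.0%}")  # -70%
for bump in (0.0, 0.2, 0.5):
    breakeven = required_cost_cut(PRICE, COST, bump)
    healthy = required_cost_cut(PRICE, COST, bump, target_margin=0.60)
    print(f"price +{bump:.0%}: cut cost {breakeven:.0%} to break even, "
          f"{healthy:.0%} to reach 60%")
```

Under this simple model, even a 50% price increase still requires cutting cost-to-serve by about 12% to break even, and by about 65% to reach a 60% margin.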

Cursor’s margin profile is contested. Multiple credible sources reported negative gross margins in mid-2025, with significant API costs against subscription revenue. Their trajectory is a useful signal regardless of the exact number: even products with strong product-market fit and rapid growth can face structural margin pressure when token consumption scales faster than pricing catches up.

The Four Numbers That Determine Whether You Make It

A rigorous operator does not set price from intuition. They build a model that converts token costs into commercial decisions. There are four layers to that model, and most operators only think about one.

Layer 1: Revenue Architecture

The revenue line is more complex than it looks. Subscription pricing is anchored too low in almost every product I have analyzed, because operators underestimate how much credit consumption scales with engagement. The operators getting this right are building four revenue streams, not one: subscription, overage, tier upsells, and enterprise contracts. Each has a different margin profile. Each needs to be modeled separately before you set a blended price.
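A minimal sketch of modeling the four streams separately before blending. The revenue and margin figures are hypothetical placeholders; the point is that the blended margin is a revenue-weighted average, so a shift in mix moves it even when no individual stream's margin changes.

```python
from dataclasses import dataclass


@dataclass
class Stream:
    name: str
    monthly_revenue: float  # USD
    gross_margin: float     # fraction, e.g. 0.55 for 55%


def blended_margin(streams: list[Stream]) -> float:
    """Revenue-weighted gross margin across all streams."""
    revenue = sum(s.monthly_revenue for s in streams)
    gross_profit = sum(s.monthly_revenue * s.gross_margin for s in streams)
    return gross_profit / revenue


# Hypothetical mix; every number is an illustrative assumption.
streams = [
    Stream("subscription", 100_000, 0.55),
    Stream("overage", 30_000, 0.70),
    Stream("tier upsells", 20_000, 0.65),
    Stream("enterprise", 50_000, 0.45),
]
print(f"blended: {blended_margin(streams):.1%}")

# Same per-stream margins, but enterprise revenue doubles.
shifted = streams[:3] + [Stream("enterprise", 100_000, 0.45)]
print(f"after mix shift: {blended_margin(shifted):.1%}")
```

Under these assumptions, doubling enterprise revenue adds gross profit but pulls the blended margin from about 56% to about 54%.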

Layer 2: Cost of Goods Sold

Three line items most operators miss entirely.

Inference costs include every token type your product consumes, not just the visible input and output. Internal reasoning steps, agent loops, and retry logic are all billed at the same rate as user-facing tokens. If you have not instrumented this, you do not know your COGS.

Infrastructure overhead (hosting, monitoring, logging, security) typically adds another 10-15% on top of inference cost across an AI product stack. This is not a rounding error at scale.

Third-party API costs are the line item teams most often forget. Every search call, vector database query, or external tool call in your agentic workflow has a cost. Even $0.002 per call adds $400 a month at 200,000 monthly transactions, and that is for a single call per transaction; a workflow that makes five such calls spends $2,000.

Your gross margin is the gap between what you charge per credit and what every token in that credit actually costs you to produce. That gap is almost always smaller than operators expect.
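The three line items combine into a fully loaded cost per credit. A minimal sketch with hypothetical per-credit inputs; only the 10-15% overhead range comes from the text, and the sketch uses its upper end.

```python
def fully_loaded_cost(inference: float, overhead_rate: float,
                      third_party: float) -> float:
    """Cost to produce one credit: inference plus infrastructure overhead
    (as a fraction of inference) plus third-party API calls."""
    return inference * (1 + overhead_rate) + third_party


def gross_margin(price: float, cost: float) -> float:
    return (price - cost) / price


# Hypothetical per-credit inputs, in USD.
PRICE = 0.010
INFERENCE = 0.005     # every token type: visible, reasoning, loops, retries
OVERHEAD_RATE = 0.15  # upper end of the 10-15% range
THIRD_PARTY = 0.001   # search, vector database, external tool calls

naive = gross_margin(PRICE, INFERENCE)
loaded = gross_margin(PRICE, fully_loaded_cost(INFERENCE, OVERHEAD_RATE, THIRD_PARTY))
print(f"margin on inference alone: {naive:.1%}")   # 50.0%
print(f"fully loaded margin:       {loaded:.1%}")  # 32.5%
```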

Layer 3: The Gross Margin Reality

The 60% gross margin threshold matters because it is the level at which LTV:CAC math becomes defensible for most institutional investors. It is a target, not a gate. The Bessemer Supernova cohort runs at 25% and still attracts capital because growth rate can temporarily substitute for margin in early stages [2]. The question is not whether you are at 60% today. It is whether you have a credible path to it before your runway forces the conversation.

If you are selling credits at $0.005 and your cost-to-serve is $0.0085, your gross margin is negative before you pay for a single sales call or engineer. Model the full waterfall before you set a price.
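A minimal sketch of that waterfall using the $0.005 price and $0.0085 cost-to-serve from the text. The monthly credit volume and operating expense lines are hypothetical, and the second helper inverts the margin formula to find the price a 60% target would require.

```python
def waterfall(credits: int, price: float, cost_per_credit: float,
              opex: dict[str, float]) -> list[tuple[str, float]]:
    """Monthly P&L steps from revenue down to operating result, in USD."""
    revenue = credits * price
    cogs = credits * cost_per_credit
    running = revenue - cogs
    steps = [("revenue", revenue), ("cogs", -cogs), ("gross profit", running)]
    for line, amount in opex.items():
        running -= amount
        steps.append((f"after {line}", running))
    return steps


def price_for_margin(cost_per_credit: float, target_margin: float) -> float:
    """Invert m = (p - c) / p to get p = c / (1 - m)."""
    return cost_per_credit / (1 - target_margin)


# 10M credits/month and the opex figures are hypothetical.
steps = waterfall(10_000_000, 0.005, 0.0085,
                  {"sales & marketing": 40_000, "R&D": 60_000})
for label, amount in steps:
    print(f"{label:<26} {amount:>12,.0f}")

print(f"price for 60% margin: ${price_for_margin(0.0085, 0.60):.5f}")
```

At this cost-to-serve, a 60% gross margin implies a credit price of about $0.021, more than four times the current $0.005.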

Better pricing buys you runway. It does not fix a broken cost structure at the model layer. That requires volume, routing, or your own model. But most operators are not close to that problem yet. They are still losing margin to costs they have not measured.

Layer 4: The Questions That Expose Your Real Risk

Most pricing conversations focus on what to charge. These four questions focus on what you are not accounting for. Operators who answer them before launch live in a different risk category than those who discover them after.

What is the fully loaded cost of your most expensive task type, including abnormal reasoning chains? Most cost models are built on average usage. Power users and edge cases destroy average-based pricing.
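A minimal sketch of how average-based pricing breaks, using hypothetical per-task costs: the price is set from the average to hit a 60% margin, then the same price meets a mix that has drifted toward expensive reasoning chains.

```python
from statistics import mean


def realized_margin(task_costs: list[float], price_per_task: float) -> float:
    """Gross margin when every task is billed at the same price."""
    revenue = price_per_task * len(task_costs)
    return (revenue - sum(task_costs)) / revenue


# Hypothetical per-task costs in USD: mostly cheap, with a few abnormal
# reasoning chains at 30x the typical cost.
launch_mix = [0.02] * 90 + [0.05] * 8 + [0.60] * 2

# Price set from the average cost, targeting a 60% gross margin.
price = mean(launch_mix) / (1 - 0.60)

# Same price after power users shift volume toward the expensive tail.
later_mix = [0.02] * 86 + [0.05] * 8 + [0.60] * 6

print(f"price per task: ${price:.3f}")
print(f"launch margin:  {realized_margin(launch_mix, price):.0%}")
print(f"later margin:   {realized_margin(later_mix, price):.0%}")
print(f"tasks priced below cost: {sum(c > price for c in launch_mix)} of {len(launch_mix)}")
```

In this sketch 2% of tasks carry about 35% of the cost. Tripling the tail's share of volume, with the price unchanged, drops the margin from 60% to roughly 33%.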

Do you currently lose money on your heaviest users, and do you know who they are? If you do not have this number, you do not have a cost model. You have a guess.
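Answering it requires attributing cost to individual users. Once that attribution exists, finding the money-losers is simple. A minimal sketch with hypothetical user IDs and figures:

```python
from dataclasses import dataclass


@dataclass
class UserMonth:
    user_id: str
    revenue: float  # USD billed this month: subscription plus overage
    cost: float     # USD of fully loaded token and tool cost attributed to the user


def money_losers(users: list[UserMonth]) -> list[UserMonth]:
    """Users whose attributed cost exceeds their revenue, largest loss first."""
    losers = [u for u in users if u.cost > u.revenue]
    return sorted(losers, key=lambda u: u.revenue - u.cost)


# Hypothetical month of per-user data.
users = [
    UserMonth("u-101", 20.0, 4.0),
    UserMonth("u-102", 20.0, 9.0),
    UserMonth("u-103", 20.0, 55.0),
    UserMonth("u-104", 200.0, 260.0),
    UserMonth("u-105", 20.0, 21.0),
]

for u in money_losers(users):
    print(f"{u.user_id}: loses ${u.cost - u.revenue:,.2f} per month")
```

The largest loss here comes from the highest-revenue account, which is why ranking users by revenue alone hides the problem.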

What happens to your gross margin if your model provider raises API costs 20%? This is not a hypothetical. Anthropic introduced Priority Service Tiers in 2025 that reportedly doubled infrastructure costs for some enterprise clients overnight. If your pricing does not survive a 20% cost shock, you have a dependency, not a strategy. This is also where open source infrastructure changes the math permanently.
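The stress test is one line of algebra. If gross margin is m, cost is (1 - m) of price; a shock s applied to the provider-exposed share f of that cost leaves a margin of 1 - (1 - m)(1 + f·s). A minimal sketch; the starting margins and exposure shares are illustrative.

```python
def margin_after_shock(margin: float, shock: float,
                       exposed_share: float = 1.0) -> float:
    """Gross margin after provider costs rise by `shock`, price unchanged.

    Cost is (1 - margin) per unit of price. Only `exposed_share` of that
    cost depends on the provider, so cost grows by a factor of
    (1 + exposed_share * shock).
    """
    return 1 - (1 - margin) * (1 + exposed_share * shock)


for start in (0.60, 0.25):
    for exposed in (1.0, 0.5):
        after = margin_after_shock(start, 0.20, exposed)
        print(f"start {start:.0%}, {exposed:.0%} exposed: {after:.0%} after +20%")
```

A fully exposed 20% shock costs a 60%-margin product 8 points but a 25%-margin product 15 points, because the shock scales with cost, not with margin. The thinner your margin, the harder the same shock lands.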

What external APIs or agents are embedded in your standard workflow that you are not yet billing against? Every third-party tool call in your product stack has a cost. Most operators only discover this at scale.

Where This Is Going

The companies sustaining 60% gross margins share one behavior: they modeled the full cost stack before pricing, not after. The operators who survive will not do so by building better models. They will do so by building better pricing systems.

The token is the ingredient. The outcome is the product. Price accordingly.

References

[1] State of AI, Bessemer Venture Partners, Sep 2025

[2] The Gross Margin Debate in AI, Tanay Jaipuria, Sep 2025

[3] Cursor Pricing, 2025

[4] Manus Pricing, 2025

[5] Perplexity Pricing, 2025

[6] GPT-4 Training Cost, Sam Altman / Stanford AI Index, 2024

[7] Frontier Model Cost Projections, Dario Amodei / Fortune, Apr 2024

[8] Inference and Compute Costs, Scale AI Foundation Model Report, Aug 2025

[9] Custom LLM Build Costs 2025, Follow.life / Epoch AI Analysis, 2025